The March of Nines

I’ve been going back and forth on this one for a while. Will AI replace software? Will it replace white-collar work? I’ve written pieces that lean pretty hard into yes, and faster than you think. I still think that’s partly right. But I keep finding really smart people who’ve been deep in AI for a long time, and they’re landing somewhere more nuanced than the “software is dead” crowd.

I’m not that smart, so when I’m trying to figure something out, I look for people who are smarter than me and have been thinking about the problem longer.

Two people have shifted how I’m thinking about this.

Karpathy and the Demo Problem

Andrej Karpathy is one of the few people on Earth with the resume to hold a strong opinion here. He was a founding member of OpenAI. He ran Tesla’s Full Self-Driving project. He’s been in AI for 15 years. He coined the term “vibe coding.” This is not a skeptic.

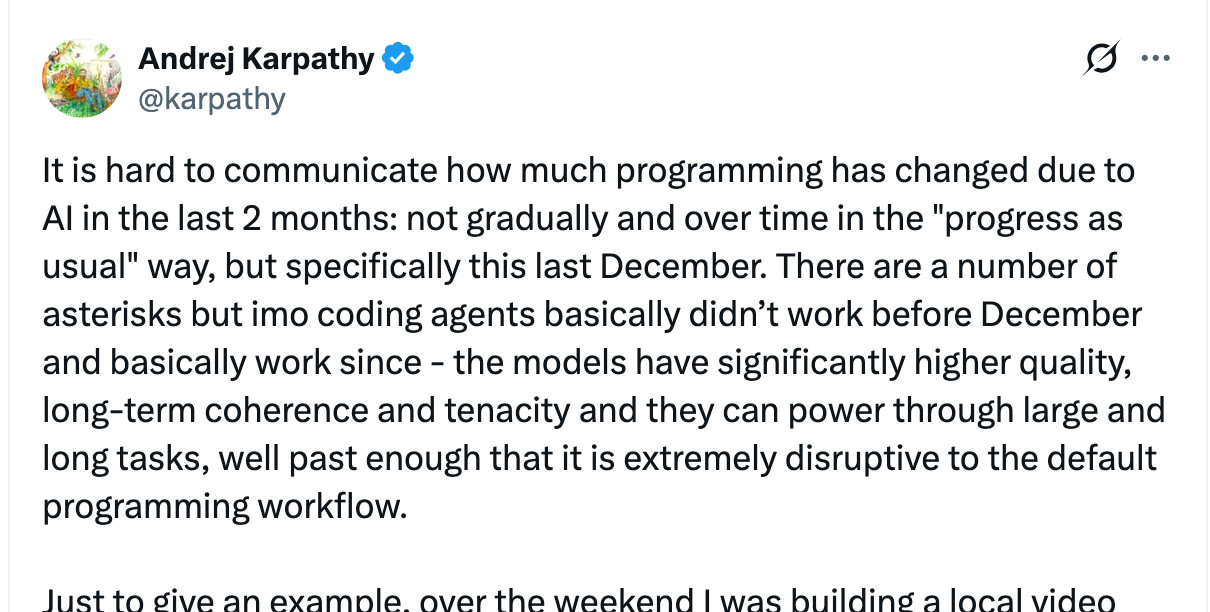

In fact, he’s one of the most excited people on the planet about what agents can do right now. In February he posted that programming has become “unrecognizable” in just two months — that coding agents basically didn’t work before December 2025 and basically work since.

When someone pushed back and said they weren’t seeing better results on production code — the hard stuff like UI, networking, concurrency — Karpathy’s response was “very possible you’re holding it wrong.” He’s a believer. He’s using these tools every day and he’s blown away by them.

And this same guy — the one telling you agents are transformative right now — is also making an argument I can’t stop thinking about. He calls it the march of nines.

“What takes the long amount of time and the way to think about it is that it’s a march of nines. Every single nine is a constant amount of work. When you get a demo and something works 90% of the time, that’s just the first nine. Then you need the second nine, a third nine, a fourth nine, a fifth nine.”

Each nine takes the same amount of work as the last one. Getting from 90% to 99% is the same effort as getting from 99% to 99.9%. And production software — the kind where a bug means Social Security numbers get leaked — needs a lot of nines.
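To make the arithmetic concrete, here is a minimal sketch of what each extra nine buys. The million-operation volume is a made-up number for illustration, not a figure from Karpathy, and the function names are my own. The point it shows: every nine cuts failures by 10x, and by Karpathy's account every one of those steps costs about the same amount of work.

```python
# Illustrative only: the operation volume below is an assumption,
# not a figure from the source.

def reliability(nines: int) -> float:
    """Success rate for a given number of nines, e.g. 3 -> 0.999."""
    return 1 - 10 ** -nines


def expected_failures(nines: int, operations: int) -> float:
    """Expected number of failed operations out of `operations` attempts."""
    return operations * 10 ** -nines


if __name__ == "__main__":
    operations = 1_000_000  # hypothetical volume
    for n in range(1, 6):
        print(
            f"{n} nine(s): {reliability(n):.5%} success, "
            f"about {expected_failures(n, operations):,.0f} "
            f"failures per {operations:,} operations"
        )
```

A demo at one nine and a production system at five nines differ by four orders of magnitude in failures, even though both "mostly work."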

He knows this because he lived it with self-driving. He rode in a Waymo back in 2014 and thought it was basically done. The demo was perfect. The car drove itself, no issues. That was twelve years ago. Self-driving still isn’t done. He recently said it’s “not even near done” when you mean the full vision — streets filled with autonomous vehicles, reclaiming space from parking lots, reshaping cities. He’s “unreasonably excited” about the eventual impact, but the edge cases and scaling are still a decade-scale march.

“I’m very unimpressed by demos. Whenever I see demos of anything, I’m extremely unimpressed by that. If it’s a demo that someone cooked up as a showing, it’s worse. If you can interact with it, it’s a bit better. But even then, you’re not done.”

That’s the thing that makes his argument so compelling. He’s not dismissing AI tools. He’s more excited about them than almost anyone. But he’s watched this movie before with self-driving — where a perfect demo in 2014 led to a decade of grinding through edge cases. The magical first 90% draws the hype. The critical last 10% separates demos from products.

I use Claude Code every day. I’m genuinely blown away by it. But I’ve also spent enough time with it to know that last 10% is where things get hard. Edge cases. Weird interactions. Things that work perfectly in one context and break in another. The kind of stuff that takes years of accumulated knowledge to get right.

Karpathy made another point that stuck with me:

“If you automate 99% of a job, that last 1% the human has to do is incredibly valuable because it’s bottlenecking everything else.”

Think about that for a second. The better AI gets at handling 99% of the work, the more valuable the human becomes for that remaining 1%. Not less valuable. More.

That’s counterintuitive. The default assumption is that AI doing 99% of the work means humans are 99% replaceable. But the 1% that requires human judgment — the edge case the model hasn’t seen, the context that doesn’t fit the training data, the decision that requires actual understanding of the business — that becomes the bottleneck. And bottlenecks are expensive.
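One way to put rough numbers on the bottleneck idea is to borrow the Amdahl's law formula from parallel computing. That framing is mine, not Karpathy's, and the fractions and speedups below are assumptions picked for illustration. The sketch assumes the automated 99% runs some multiple faster while the remaining 1% stays at human speed.

```python
# A sketch using Amdahl's law as a stand-in model. The 0.99 fraction and
# the speedup values are illustrative assumptions, not measurements.

def overall_speedup(automated_fraction: float, automation_speedup: float) -> float:
    """Whole-job speedup when `automated_fraction` of the work runs
    `automation_speedup` times faster and the rest runs at human speed."""
    human_fraction = 1 - automated_fraction
    return 1 / (human_fraction + automated_fraction / automation_speedup)


def human_share_of_time(automated_fraction: float, automation_speedup: float) -> float:
    """Fraction of the new, shorter job duration spent on the human part."""
    human_fraction = 1 - automated_fraction
    total = human_fraction + automated_fraction / automation_speedup
    return human_fraction / total


if __name__ == "__main__":
    for speedup in (10, 100, 1000):
        print(
            f"AI {speedup}x faster on 99% of the job: "
            f"overall {overall_speedup(0.99, speedup):.1f}x, "
            f"human part is {human_share_of_time(0.99, speedup):.0%} of total time"
        )
```

Under this model, no matter how fast the automated part gets, the whole job never runs more than 100x faster, and the human 1% grows to dominate the clock. That is the sense in which the last 1% becomes the expensive part.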

“Self-driving cars are nowhere near done still. The deployments are pretty minimal. Even Waymo and so on has very few cars… there are very elaborate teleoperation centers of people kind of in a loop with these cars. In some sense, we haven’t actually removed the person, we’ve moved them to somewhere where you can’t see them.”

We didn’t remove the human from self-driving. We just moved them to a room you can’t see. I think the same thing is going to happen with a lot of “AI-automated” software.

Gurley: Trust Doesn’t Get Vibe-Coded

Bill Gurley made a different version of the same argument recently. Ben Thompson asked him point blank: is software dead?

“I doubt it. I think it’s really unlikely, and someone highlighted all the companies Anthropic’s actually paying seat licenses for, the notion that you would build your own ledger for financials is really stupid because the auditors have to come in and touch the thing and there needs to be systematic equivalents with other companies and there’d be no reason for everyone to be on their own bespoke version of that. It would make the auditor’s job ridiculously hard.”

This is the part that the “AI replaces everything” crowd misses entirely. It’s not just about whether the code works. It’s about whether the system around the code works. Auditors need to touch it. Regulators need to understand it. Other companies need to interoperate with it. There’s an entire ecosystem of institutional trust that has nothing to do with how good the code is.

You’re not going to vibe-code your accounting system. Not because AI can’t write the code — it probably can. But because your auditor won’t sign off on something some agent spun up last Tuesday. Because your bank needs your financial data in a format they recognize. Because the IRS has expectations about how records are kept. The trust lives outside the code.

Want proof? Workday’s stock has dropped 40% this year on the narrative that AI kills software. And then on an earnings call last week, their CEO mentioned that Anthropic, Google, and OpenAI all run Workday. The companies building the AI that’s supposedly going to replace enterprise software are paying for enterprise software. They have the best AI engineers on the planet, and they’re using Workday for payroll.

Where These Two Arguments Meet

Karpathy is talking about technical reliability. The code itself needs to work at five or six nines. The march of nines is a technical problem, and every nine takes the same amount of work as the last.

Gurley is talking about institutional trust. Even if the code is perfect, the systems around it — auditors, regulators, the need for companies to interoperate — mean you can’t just replace Workday with something an agent built in an afternoon.

These are two different layers of the same moat. And companies like Workday, Shopify, Visa, Mastercard — they’ve spent years building both. Years of edge cases found and fixed. Years of regulatory compliance. Years of integrations with other systems. Years of earning the trust that makes institutions willing to put their data in there.

A vibe-coded alternative might work 90% of the time on day one. That sounds impressive until you remember that the distance from 90% to 99.999% is four more nines, and that is where all the value lives.

I want to be honest about what this argument doesn’t cover. A lot of software doesn’t need five nines. Internal tools, personal dashboards, one-off automations — that stuff is getting eaten alive by agents right now, and it should be. Karpathy built a custom cardio dashboard in 30 minutes with English instructions. That’s real. For anything where “mostly works” is good enough, the game has already changed.

But that’s not Workday. That’s not Visa’s payment network. That’s not the software where a bug means an auditor flags your entire company. The market is selling off the Workdays and Visas as if they were internal dashboards.

Mr. Market Is Overreacting

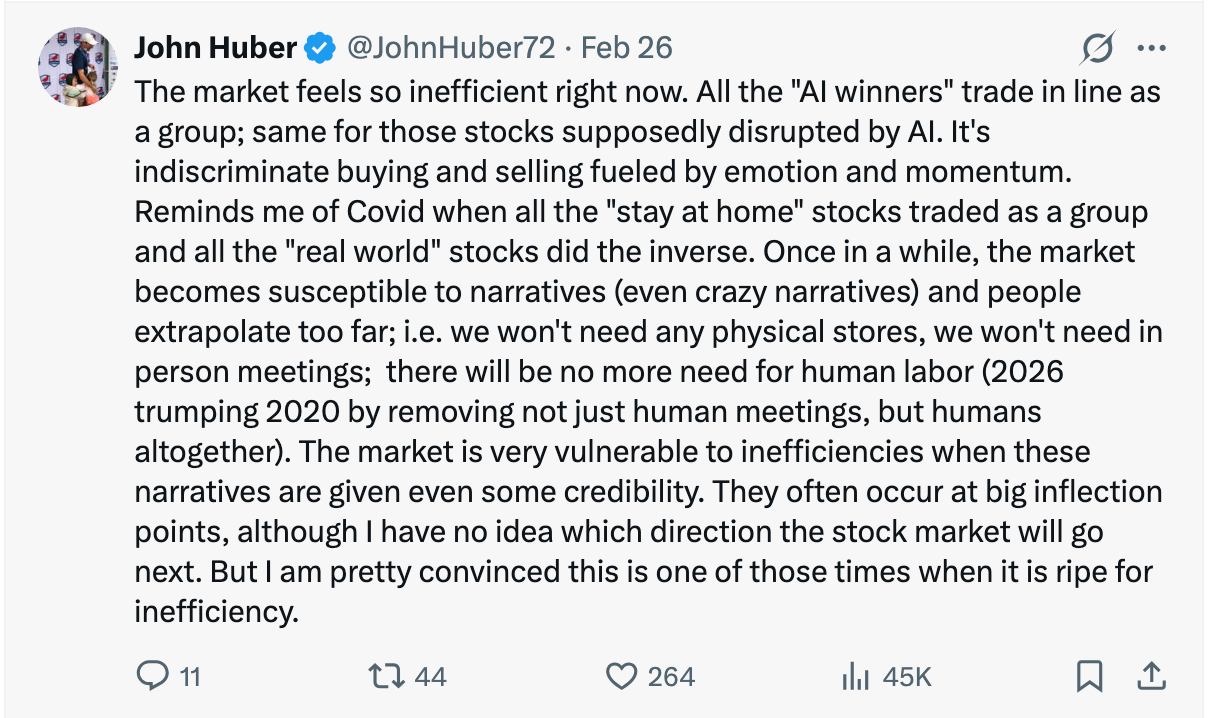

John Huber nailed it:

The market is treating every software company like it’s about to be replaced. Workday, Shopify, Visa — selling off together, as a group, because the narrative says “AI kills software.” It reminds me of COVID when all the “stay at home” stocks traded as a group and all the “real world” stocks traded as a group. The market does this. It’s indiscriminate. And that creates opportunity.

AI is great at generating the first nine. The companies being sold off have spent decades earning the rest of them.

I wrote recently that distribution beats product. That the companies with existing customer relationships capture the value. I still think that’s right. But there’s another layer. These companies don’t just have distribution. They have institutional trust. They have the accumulated knowledge of every edge case, every regulatory requirement, every integration. That’s not something you can demo your way past.

I keep going back and forth on AI. I’ve written about how the speed of forgetting catches everyone off guard. I’ve written about how edges are dissolving and nobody knows where to hide. I think those things are still true.

But I also think the market right now is confusing “AI can build a demo” with “AI can replace production software.” Those are very different things. The distance between them is the march of nines. And the person most excited about AI coding tools on the planet — the guy who coined vibe coding, who says programming is “unrecognizable,” who tells people they’re “holding it wrong” if they’re not seeing gains — that same person watched self-driving go from a perfect demo in 2014 to still-not-done in 2026. He thinks the distance is enormous. I’m inclined to listen.

If you’ve sold anything in your life, you know that trust is the hardest thing to build. That’s what a brand is. Trust. And trust doesn’t get vibe-coded.